TerraSeg: Self-Supervised Ground Segmentation for Any LiDAR

🏆 Accepted to IEEE/CVF CVPR 2026! Looking forward to presenting in Denver, Colorado.

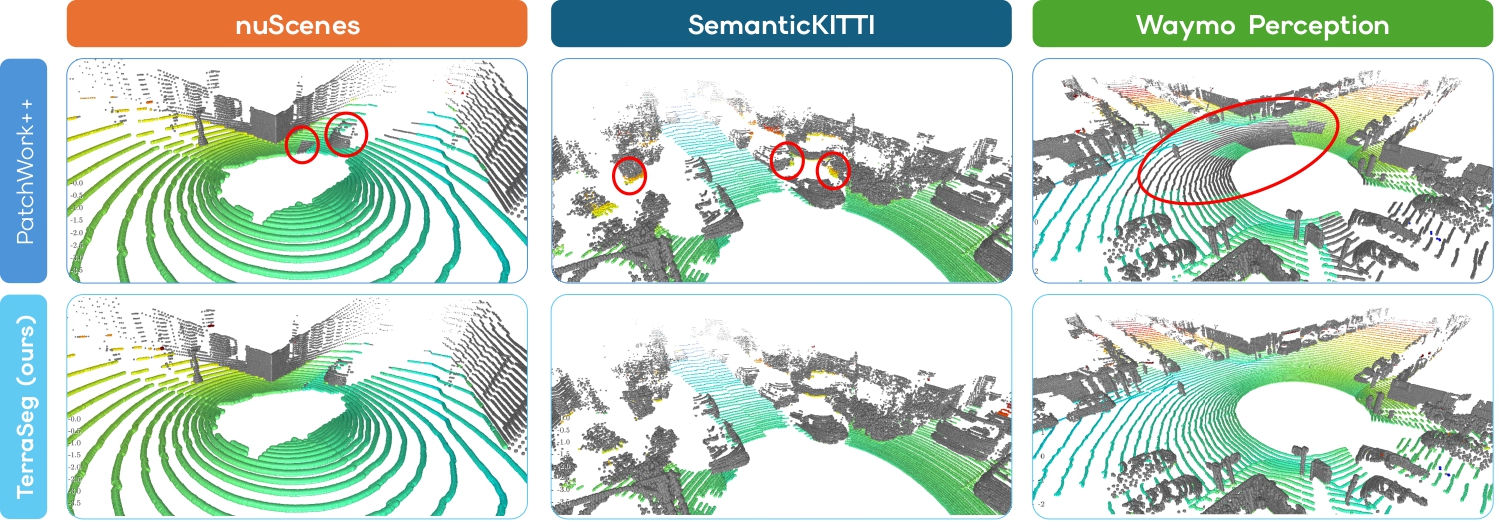

Qualitative comparison of ground segmentation by TerraSeg and the PatchWork++ baseline on the nuScenes, SemanticKITTI, and Waymo Perception datasets.

📝 Abstract

LiDAR perception is fundamental to robotics, enabling machines to understand their environment in 3D. A crucial task for LiDAR-based scene understanding and navigation is ground segmentation. However, existing methods are either handcrafted for specific sensor configurations or rely on costly per-point manual labels, severely limiting their generalization and scalability.

To overcome this, we introduce TerraSeg, the first self-supervised, domain-agnostic model for LiDAR ground segmentation. We train TerraSeg on OmniLiDAR, a unified large-scale dataset that aggregates and standardizes data from 12 major public benchmarks. Spanning almost 22 million raw scans across 15 distinct sensor models, OmniLiDAR provides unprecedented diversity for learning a highly generalizable ground model. To supervise training without human annotations, we propose PseudoLabeler, a novel module that generates high-quality ground and non-ground labels through self-supervised per-scan runtime optimization.

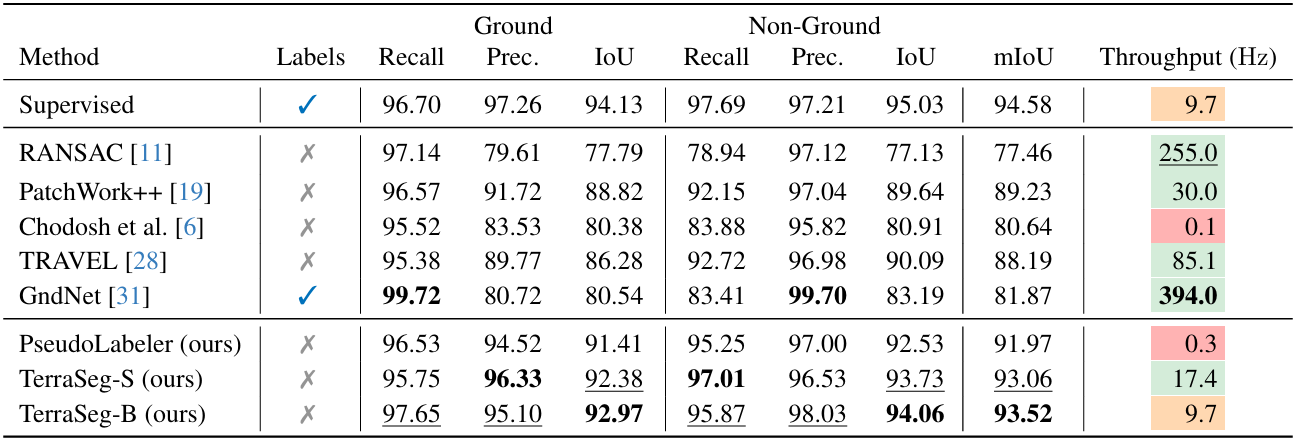

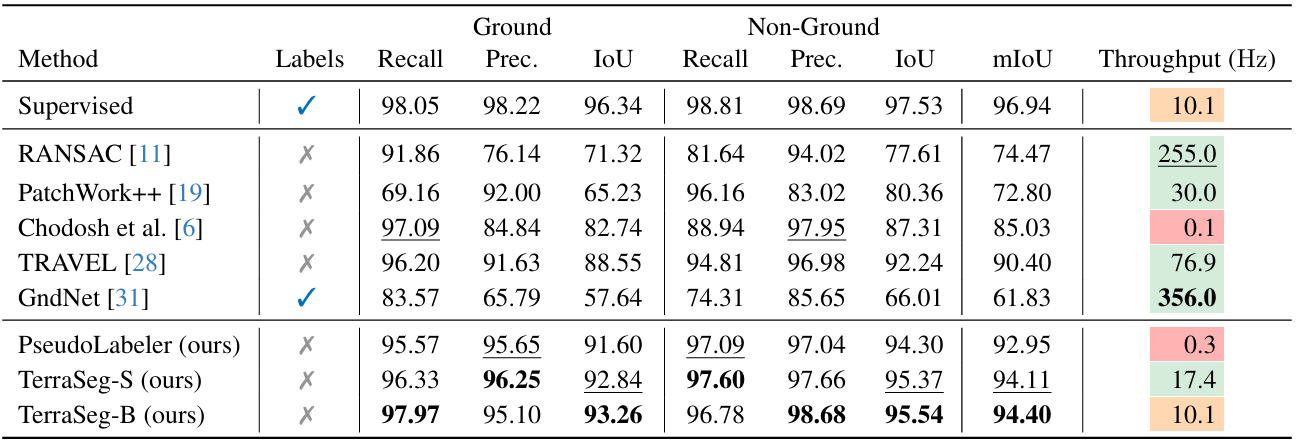

Extensive evaluations demonstrate that, despite using no manual labels, TerraSeg achieves state-of-the-art results on nuScenes, SemanticKITTI, and Waymo Perception while delivering real-time performance.

⭐ Key Contributions

- OmniLiDAR: unifies 12 datasets into ~22M standardized multi-sensor scans, enabling large-scale cross-domain generalization without sensor-specific tuning.

- PseudoLabeler: generates high-quality ground pseudo-labels via per-scan self-supervised optimization, removing the need for manual annotations.

- TerraSeg: first self-supervised, domain-agnostic LiDAR ground segmentation model, achieving SOTA performance with real-time inference.

🔬 Method

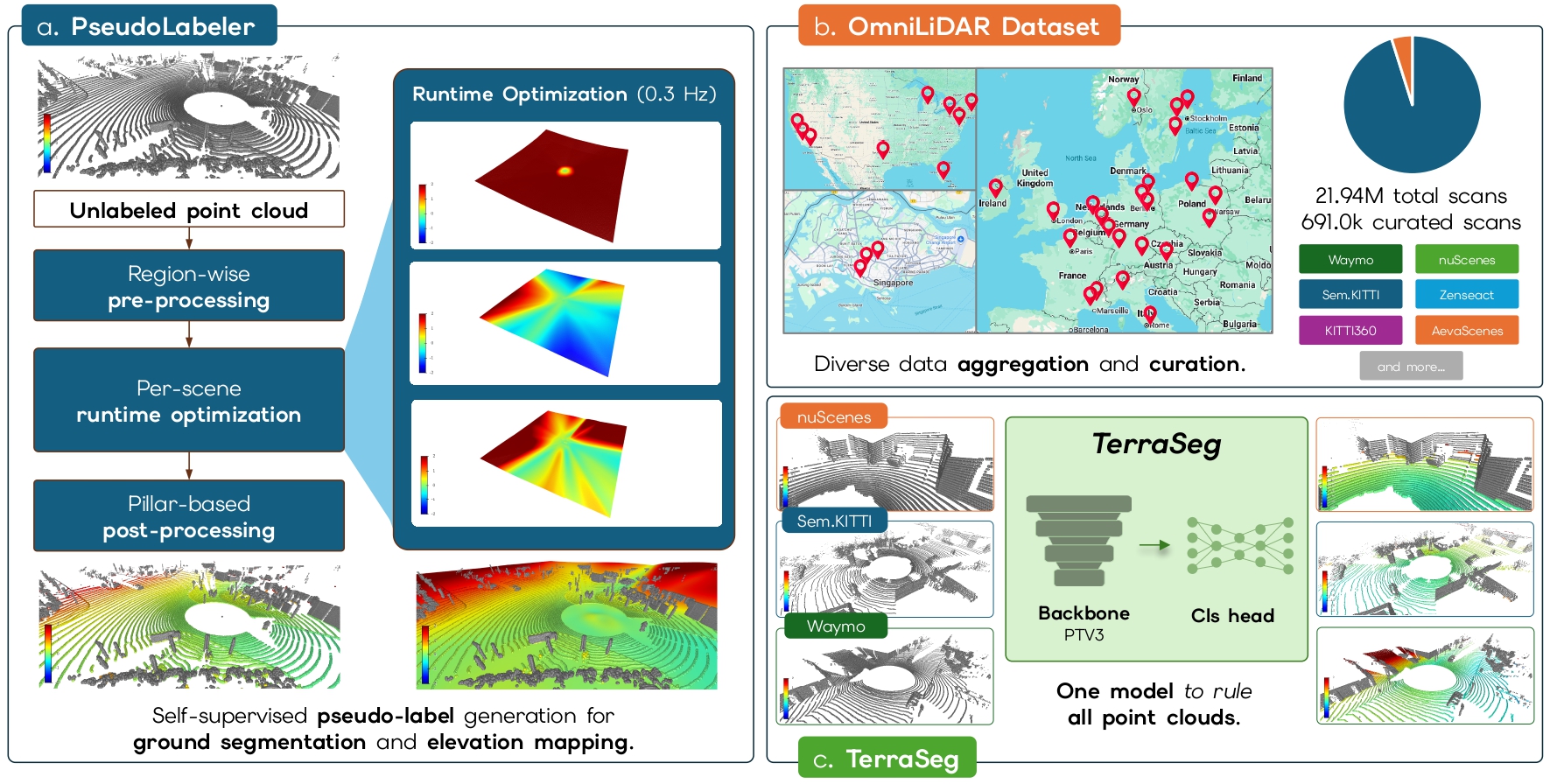

We propose a fully self-supervised pipeline for LiDAR ground segmentation, built around three key components: OmniLiDAR, PseudoLabeler, and TerraSeg.

We first construct OmniLiDAR, a large-scale and diverse dataset by aggregating raw LiDAR scans from 12 public autonomous driving benchmarks. This results in nearly 22 million scans spanning 15 different LiDAR sensor models. To ensure cross-domain consistency, we standardize all scans into a unified coordinate system, remove ego-vehicle points, and retain only geometric information (x, y, z). We deliberately process each scan independently, without temporal fusion, encouraging the model to learn generalizable geometric priors that transfer across sensors and environments.
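The per-scan standardization described above could look roughly like the following. This is a minimal sketch, not the authors' code: the function name `standardize_scan`, the 4x4 `sensor_to_common` transform, and the ego-box half-extents are assumptions made for illustration, and the ego vehicle is assumed to sit at the origin of the common frame.

```python
import numpy as np

def standardize_scan(points, sensor_to_common, ego_half_extent=(2.5, 1.2, 2.0)):
    """Keep xyz only, map into a common frame, and drop ego-vehicle points.

    points:            (N, C) array; the first three columns are x, y, z.
    sensor_to_common:  (4, 4) homogeneous transform (hypothetical, dataset-specific).
    ego_half_extent:   half-sizes in metres of an axis-aligned box around the
                       origin that is treated as the ego vehicle (assumed values).
    """
    xyz = np.asarray(points, dtype=np.float64)[:, :3]   # drop intensity, ring, time, ...
    rotation = sensor_to_common[:3, :3]
    translation = sensor_to_common[:3, 3]
    xyz = xyz @ rotation.T + translation                 # rigid transform to the common frame
    on_ego = np.all(np.abs(xyz) <= np.asarray(ego_half_extent), axis=1)
    return xyz[~on_ego]

# Usage: three points with an extra intensity channel and an identity transform.
scan = np.array([[0.5, 0.0, 0.3, 0.9],     # falls inside the assumed ego box
                 [10.0, 2.0, -1.6, 0.2],
                 [15.0, -3.0, 0.4, 0.7]])
clean = standardize_scan(scan, np.eye(4))
```

Each scan is handled on its own here, which mirrors the choice to avoid temporal fusion.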

To eliminate the need for manual annotations, we introduce PseudoLabeler, a self-supervised labeling module. For each scan, we fit a smooth ground surface by optimizing a lightweight MLP directly on the raw point cloud. We define a robust asymmetric loss that penalizes points below the surface while ignoring outliers above it. Ground labels are then obtained by thresholding the vertical residuals. To improve label quality, we incorporate (1) pre-processing to remove noisy low outliers, (2) stable runtime optimization with early stopping, and (3) pillar-based post-processing to correct misclassified object bases. This allows us to generate high-quality pseudo-labels at scale.
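To make the idea of an asymmetric fit followed by residual thresholding concrete, here is a simplified sketch. It is not PseudoLabeler itself: it swaps the MLP for a plane to stay short and dependency-light, omits the pre-processing, early stopping, and pillar-based post-processing, and every name and hyperparameter (`w_below`, `tau`, `threshold`, the step size) is an assumption chosen for illustration.

```python
import numpy as np

def fit_ground_plane(xyz, w_below=4.0, tau=0.3, lr=0.1, steps=500):
    """Fit z = a*x + b*y + c with an asymmetric, clipped squared loss.

    All hyperparameters are illustrative assumptions, not values from the paper.
    """
    scale = np.abs(xyz[:, :2]).max()            # normalise x, y so one step size works
    phi = np.column_stack([xyz[:, :2] / scale, np.ones(len(xyz))])
    w = np.array([0.0, 0.0, xyz[:, 2].max()])   # start above every point, then settle down
    for _ in range(steps):
        r = xyz[:, 2] - phi @ w                 # vertical residual, positive = above surface
        # Derivative of the per-point loss with respect to r:
        #   below the surface (r < 0):  steep quadratic with weight w_below
        #   just above (0 <= r < tau):  ordinary quadratic
        #   far above (r >= tau):       zero, so objects stop influencing the fit
        dloss_dr = np.where(r < 0, 2 * w_below * r, np.where(r < tau, 2 * r, 0.0))
        w += lr * (phi * dloss_dr[:, None]).mean(axis=0)   # descent step, since dr/dw = -phi
    return w, scale

def pseudo_label(xyz, threshold=0.2, **fit_kwargs):
    """Boolean mask: True where the residual to the fitted surface is below `threshold`."""
    w, scale = fit_ground_plane(xyz, **fit_kwargs)
    surface_z = np.column_stack([xyz[:, :2] / scale, np.ones(len(xyz))]) @ w
    return (xyz[:, 2] - surface_z) < threshold

# Usage on a synthetic scan: a gently sloped road plus points 0.5 to 2 m above it.
rng = np.random.default_rng(0)
road_xy = rng.uniform(-20, 20, (400, 2))
road_z = -1.7 + 0.01 * road_xy[:, 0] + rng.normal(0, 0.02, 400)
obj_xy = rng.uniform(-20, 20, (100, 2))
obj_z = -1.7 + 0.01 * obj_xy[:, 0] + rng.uniform(0.5, 2.0, 100)
cloud = np.vstack([np.column_stack([road_xy, road_z]), np.column_stack([obj_xy, obj_z])])
ground_mask = pseudo_label(cloud)
```

Because points far above the surface contribute no gradient, the fit behaves like a lower envelope of the scan rather than an average through ground and objects.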

Finally, we train TerraSeg, a domain-agnostic neural network for ground segmentation. Our model builds on a Point Transformer v3 backbone, adapted to improve cross-sensor generalization by using simple geometric features and group normalization. We train it using a combination of binary cross-entropy and Lovász loss on the pseudo-labels. We provide both an accurate and a real-time variant.
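As an illustration of the training objective, the sketch below combines binary cross-entropy with a Lovász term in plain NumPy. It assumes the binary Lovász hinge formulation of Berman et al. and an equal weighting of the two terms; the text above does not state which Lovász variant or weighting TerraSeg uses, and the function names are hypothetical.

```python
import numpy as np

def bce_with_logits(logits, labels):
    """Numerically stable binary cross-entropy, averaged over points."""
    per_point = np.maximum(logits, 0) - logits * labels + np.log1p(np.exp(-np.abs(logits)))
    return float(np.mean(per_point))

def lovasz_hinge(logits, labels):
    """Binary Lovász hinge loss over a flat set of points (labels in {0, 1})."""
    signs = 2.0 * labels - 1.0
    errors = 1.0 - logits * signs                 # hinge margin violations
    order = np.argsort(-errors)                   # largest error first
    errors_sorted, gt_sorted = errors[order], labels[order]
    # Gradient of the Lovász extension of the Jaccard loss w.r.t. the sorted errors.
    total_pos = gt_sorted.sum()
    intersection = total_pos - np.cumsum(gt_sorted)
    union = total_pos + np.cumsum(1.0 - gt_sorted)
    jaccard = 1.0 - intersection / union
    grad = np.diff(jaccard, prepend=0.0)
    return float(np.dot(np.maximum(errors_sorted, 0.0), grad))

def segmentation_loss(logits, labels, lovasz_weight=1.0):
    """BCE plus weighted Lovász hinge; the weight of 1.0 is an assumption."""
    return bce_with_logits(logits, labels) + lovasz_weight * lovasz_hinge(logits, labels)

# Usage: pseudo-labels (1 = ground) against confident correct and incorrect logits.
pseudo = np.array([1.0, 1.0, 0.0, 0.0])
correct = np.array([10.0, 10.0, -10.0, -10.0])
loss_correct = segmentation_loss(correct, pseudo)
loss_wrong = segmentation_loss(-correct, pseudo)
```

The cross-entropy term scores each point independently, while the Lovász term is a surrogate for the intersection-over-union of the whole scan, so the two are complementary.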

By decoupling offline pseudo-label generation from efficient model inference, our approach achieves scalable, real-time, and state-of-the-art performance without any manual labels.

Overview of TerraSeg. (a) PseudoLabeler generates self-supervised point-wise ground/non-ground labels per raw LiDAR scan. (b) OmniLiDAR unifies 12 major autonomous driving datasets within an aggregated framework, yielding a diverse curated corpus drawn from nearly 22 million raw scans. (c) TerraSeg is a real-time, domain-agnostic model for ground segmentation, trained on OmniLiDAR using these self-supervised pseudo-labels.

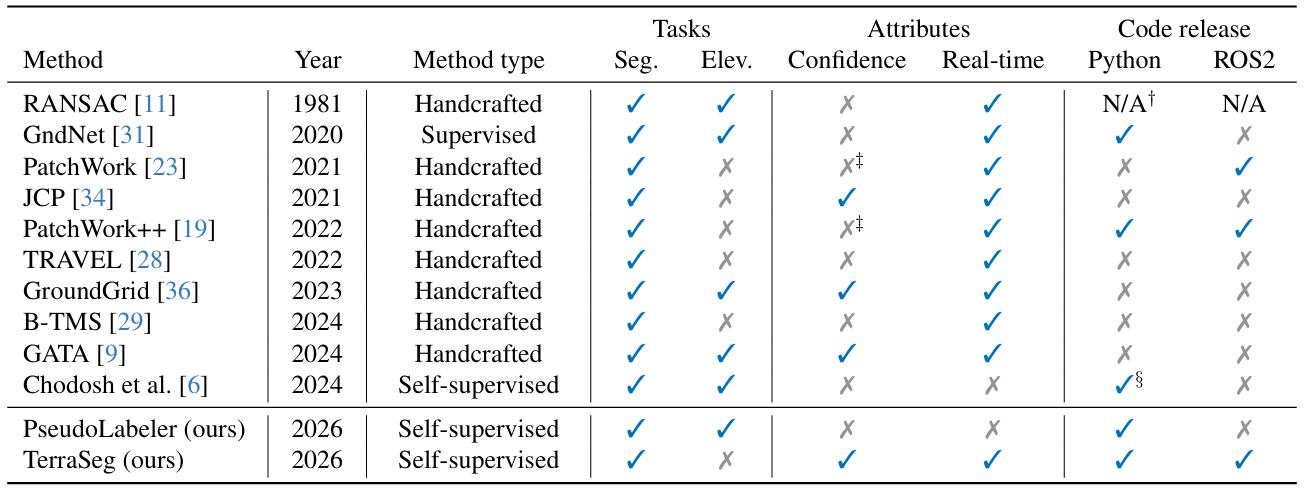

Table I. Overview and comparison of the main ground segmentation methods. Conceptually, TerraSeg is the only solution that is both real-time and domain-agnostic.

📊 Results

We report quantitative results on the nuScenes, SemanticKITTI, and Waymo Perception datasets. Without any manual labels, TerraSeg achieves state-of-the-art LiDAR ground segmentation across a wide variety of sensor configurations and environments.

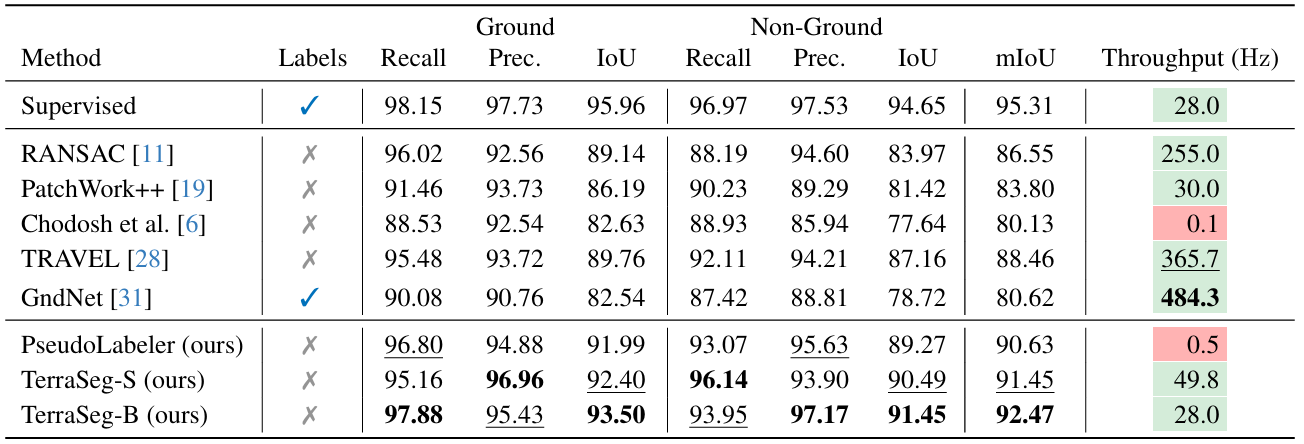

Table II. LiDAR ground segmentation on the nuScenes validation split.

Table III. LiDAR ground segmentation on the SemanticKITTI validation split.

Table IV. LiDAR ground segmentation on the Waymo Perception validation split.

📚 Citation

@article{lentsch2026terraseg,
  title         = {TerraSeg: Self-Supervised Ground Segmentation for Any LiDAR},
  author        = {Lentsch, Ted and Montiel-Marín, Santiago and Caesar, Holger and Gavrila, Dariu},
  year          = {2026},
  eprint        = {2603.27344},
  archivePrefix = {arXiv},
  primaryClass  = {cs.CV},
}

🤝 Acknowledgements

This research has been conducted as part of the EVENTS project, which is funded by the European Union, under grant agreement No 101069614. Views and opinions expressed are, however, those of the author(s) only and do not necessarily reflect those of the European Union or European Commission. Neither the European Union nor the granting authority can be held responsible for them. This work has also been supported by project PID2024-161576OB-I00, funded by Spanish MICIU/AEI/10.13039/501100011033 and co-funded by the European Regional Development Fund (ERDF, “A way of making Europe”).